Machine Learning Model Monitoring in Process Industry (Post Deployment)

In machine learning, a model learns a relationship between a set of input variables and an output variable. In the process industry, identifying this relationship is difficult because it can be highly non-linear. The internal dynamics of the process and the behavior of the operator running it are both of interest to ML/AI models, which try to capture every such pattern that is present in the data they have been exposed to.

What’s Drifting in your Process – Data or Concept?

In this article, we look at a very interesting and important concept in data-driven/Machine learning techniques. With the rapid development of technology, industries have figured out multiple ways to estimate the performance of their deployed Machine learning/AI solutions. One of them is tracking drift: data drift and concept drift. Today, almost every industry is sitting on its own data mine, eager to extract whatever information it can. But this surge in industrial applications has also shown that there are many challenges at various levels of implementation. These challenges start with the data itself: its integrity, its behavior, its distribution and so on. Sometimes we spend most of our time developing a process model (Machine learning model) that performs very well on the training dataset, but when it is tested on live data its performance drops drastically. What could have gone wrong here? Are we missing something, or are we missing a lot? We shall pick this up again later, in more detail.

Types of Model Drift in Machine Learning

Data Drift & Concept Drift:

Now let’s talk about data drift and concept drift. Data drift is a general term with a common interpretation across domains, whereas concept drift makes us think, and rethink, about the underlying domain know-how. Today, whoever starts a digitalization journey has a very fundamental question in mind: is the data sufficient to build the machine learning model? The answer could be both yes and no; it really depends on the methodology and the assumptions made while developing the model. Let us try to understand this with a simple example.

Data Drift:

Let’s assume you have used a standard scaler in a predictive maintenance, quality prediction, or similar Machine learning/AI project. This means that you transform every data point (sensor data, failure data, quality data) using the equation below:

z = (x − μ) / σ

where x is the raw value of a process parameter, and μ and σ are its mean and standard deviation as estimated from the training data.

Essentially, we transform the dataset so that every process parameter in X has a mean of 0 and a standard deviation of 1. Before we move ahead, one has to be conceptually clear about the difference between a sample and the population. The population is the entire set of possible scenarios, whereas a sample is a subset of the population. Generally, we assume that samples drawn at random do not differ significantly from each other. This also means assuming that the mean and SD of any random sample drawn from the dataset are equal to those of the population.
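As a minimal sketch of the standard scaler described above, the snippet below learns the mean and SD from a small training sample and applies them. The temperature values and function names are hypothetical, chosen only for illustration, and the population SD is used because that is what scikit-learn's StandardScaler computes.

```python
import statistics

def fit_standard_scaler(values):
    """Return the (mean, standard deviation) learned from a training sample."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)  # population SD (divides by n)
    return mu, sigma

def standardize(values, mu, sigma):
    """Apply z = (x - mu) / sigma to every value."""
    return [(v - mu) / sigma for v in values]

# Hypothetical heat exchanger outlet temperatures (deg C) used for training
train_temps = [78.0, 80.5, 79.2, 81.1, 80.0, 79.6, 80.8, 78.8]
mu, sigma = fit_standard_scaler(train_temps)
z = standardize(train_temps, mu, sigma)

# On the training sample itself the result has mean ~0 and SD ~1
print(f"mean={statistics.fmean(z):.3f}, sd={statistics.pstdev(z):.3f}")
```

The mean of 0 and SD of 1 are guaranteed only for the data the scaler was fitted on; any other sample is transformed with the same frozen μ and σ.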

To understand this better, let us take the example of heat exchanger predictive maintenance. The population dataset is the entire dataset, including the process parameters, downtimes and maintenance records, right from day 0 of the process. The sample could be the last 1 year of data. One reason for selecting only the last year could be that data from the beginning of the process is not available because it was never stored. So one is forced to assume that the mean and SD of the past year’s data are representative of the entire span, which could be a wrong expectation.

Let’s say you have developed the machine learning model on top of this transformed dataset, with an acceptable level of accuracy on the training/validation/cross-validation datasets, and deployed it in real time on live data. Here the model will transform the new data with the same mean and SD that were computed during training. There is a high chance that the behavior (mean and SD) of the new data is very different from what was estimated from the training dataset. This will ultimately degrade the performance of the deployed model. This is what data drift means in the process industry. The cause could be insufficient data in the training set, or something along similar lines. The same applies to the MinMax scaler or any other scaler. The MinMax scaler is based on the minimum and maximum values observed in the dataset, which could be completely different across the training, validation and testing samples.
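The effect can be illustrated with a small simulation. This is only a sketch under stated assumptions: the sensor readings are synthetic (drawn from normal distributions with made-up means and SDs), and the scaler parameters are frozen at training time exactly as described above.

```python
import random
import statistics

random.seed(0)

# Synthetic readings: the training year vs. live data after the operating point moved
train = [random.gauss(50.0, 2.0) for _ in range(500)]
live = [random.gauss(56.0, 3.5) for _ in range(500)]

# Scaler parameters are estimated once, on the training data only
mu, sigma = statistics.fmean(train), statistics.pstdev(train)
lo, hi = min(train), max(train)

# Live data is transformed with the frozen training parameters
live_z = [(x - mu) / sigma for x in live]
live_minmax = [(x - lo) / (hi - lo) for x in live]

z_mean = statistics.fmean(live_z)
z_sd = statistics.pstdev(live_z)
outside = sum(1 for v in live_minmax if v < 0.0 or v > 1.0) / len(live_minmax)

print(f"live z-score mean={z_mean:.2f}, sd={z_sd:.2f} (training gave 0 and 1)")
print(f"fraction of live points outside the MinMax range [0, 1]: {outside:.1%}")
```

The model was fitted on inputs centered at 0 with unit spread (or bounded in [0, 1]); the live inputs it now receives no longer look like that, even though nothing in the model itself has changed.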

In the above example we used the impact of scaling techniques to demonstrate data drift, but there can be many other causes. This is why one should look prudently not only at the model performance metrics but also at the data itself.
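One possible way to look at the data itself is the Population Stability Index (PSI), which compares the binned distribution of a live sample against a reference sample. PSI is not mentioned in the original discussion; it is swapped in here as one common choice, and the data, bin count and thresholds below are assumptions for illustration (0.1 and 0.25 are frequently quoted rules of thumb, not fixed standards).

```python
import math
import random

def psi(reference, current, n_bins=10):
    """Population Stability Index of `current` against `reference`.

    Bins are equal-width over the reference range, which must not be constant.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # out-of-range values go to the edge bins
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = bin_fractions(reference), bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

random.seed(1)
reference = [random.gauss(50.0, 2.0) for _ in range(1000)]   # training period
stable = [random.gauss(50.0, 2.0) for _ in range(1000)]      # live, same behavior
shifted = [random.gauss(53.0, 2.0) for _ in range(1000)]     # live, mean has moved

psi_stable = psi(reference, stable)
psi_shifted = psi(reference, shifted)
print(f"PSI stable={psi_stable:.3f}, PSI shifted={psi_shifted:.3f}")
```

A check like this can run per process parameter on a schedule, so that a change in the input data is noticed before it shows up as degraded model accuracy.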

Concept Drift:

Concept drift happens when the concept, that is, the relationship between the inputs and the output, changes over the course of time. In practice it also shows up when the process data model (Machine learning) has not yet learnt the actual physics from the data. There can be multiple reasons for this, such as an insufficient volume of data considered for training. For example, during the step-test stage of an APC implementation one may end up with an incomplete dataset, which can be misleading because not all scenarios were covered during learning. The point to note here is that a model can predict only those scenarios on which it was trained. So, if for some reason the training dataset does not contain certain scenarios, the model is liable to misinterpret them and produce misleading predictions. Let us continue with the heat exchanger example, where a failure could be due to corrosion (A), mechanical issues (B), or improper maintenance (C). Now let’s assume that the training data (process parameters, failure logs and so on) contained only the first two failure codes. The model therefore does not know that a failure due to improper maintenance (C) is even possible. So, when deployed, the model would never predict C, even when an actual C occurs. We may also have obtained a training and validation accuracy of more than 90–95%, but that figure only covered the binary outcome, A or B. By now you will have realized that even though the model performed so well during training, at go-live it misclassified the outcomes on which it was never trained. This makes us rethink the concept (the scenarios) that we expected the model to predict but never fed to it.
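The heat exchanger case can be sketched with a toy classifier. Everything here is hypothetical: the two features, their values, and the nearest-centroid model are stand-ins chosen to keep the example short, not the method used in any real project.

```python
import statistics

def fit_centroids(samples):
    """samples: list of (features, label). Returns {label: mean feature vector}."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(statistics.fmean(col) for col in zip(*rows))
        for label, rows in by_label.items()
    }

def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def sq_dist(label):
        return sum((a - b) ** 2 for a, b in zip(features, centroids[label]))
    return min(centroids, key=sq_dist)

# Hypothetical features: (wall-thickness loss in %, vibration in mm/s)
train = [
    ((8.0, 1.0), "A"), ((9.0, 1.2), "A"), ((7.5, 0.9), "A"),  # corrosion
    ((1.0, 6.0), "B"), ((1.5, 7.0), "B"), ((0.8, 6.5), "B"),  # mechanical issues
]
centroids = fit_centroids(train)
train_acc = sum(predict(centroids, f) == y for f, y in train) / len(train)

# Live failures caused by improper maintenance (C): the label was never seen in training
live = [((4.0, 3.5), "C"), ((4.5, 4.0), "C")]
live_preds = [predict(centroids, f) for f, _ in live]
unseen = {y for _, y in live} - set(centroids)

print(f"training accuracy: {train_acc:.0%}")
print(f"predictions for actual C failures: {live_preds}")
print(f"failure codes missing from training: {unseen}")
```

However high the training accuracy, the model can only ever answer A or B. Comparing the failure codes logged in production against the set of labels used in training is a simple way to catch this gap.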

Fig-1. (a) Represents the good fit for training on dataset where relative humidity is centred around 50, (b) Represents the incorrect predictions due to drift in the values of relative humidity

Another classic example is a process where we have temperature, pressure, flow, level and volume parameters, and we intend to predict the quality variable Y in real time. Now assume that the training/validation dataset shows no variability in level and volume, which leads the machine learning model to treat these parameters as almost constant. (We are assuming a production-scale process, which is a set process where the volume or the level does not change appreciably during the operational period.) So the model will naturally assign the least weight to these parameters. From physics, however, we may know that level and volume have a huge impact on Y. Since the mathematical model does not have this knowledge, it will effectively drop these parameters from the prediction, and when the volume or level does change, the model will misinterpret the relationship and predict the wrong outcomes. To counter such challenges, there are several routes for bringing this intelligence into the model. The simplest is to gather more data until all of the required scenarios are captured. Alternatively, one can generate synthetic data using steady-state or dynamic simulations, which can be one of the closest approximations to real-life scenarios. Or one may impose first-principle constraints on the outcomes of the model, which ensures that the fundamentals of physics are not violated.
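Two of the safeguards above can be sketched in a few lines: flagging live inputs that fall outside the range seen in training, and constraining a prediction to physically possible bounds. The parameter names, values, tolerance and the 0–100 bound are assumptions for illustration only.

```python
def training_envelope(rows):
    """rows: list of {parameter: value}. Returns {parameter: (min, max)} seen in training."""
    names = rows[0].keys()
    return {n: (min(r[n] for r in rows), max(r[n] for r in rows)) for n in names}

def out_of_envelope(envelope, row, tolerance=0.05):
    """Return the parameters whose live value lies outside the training range.

    The range is widened by `tolerance` times its width on each side.
    """
    flagged = []
    for name, (lo, hi) in envelope.items():
        margin = tolerance * (hi - lo)
        if row[name] < lo - margin or row[name] > hi + margin:
            flagged.append(name)
    return flagged

def clip_to_physical_bounds(prediction, lower=0.0, upper=100.0):
    """Keep a prediction (e.g. a purity in %) inside physically possible limits."""
    return min(max(prediction, lower), upper)

# Hypothetical training data: level barely moves, so the model never learns its effect
train_rows = [
    {"temperature": 180.0, "level": 2.50},
    {"temperature": 183.5, "level": 2.51},
    {"temperature": 185.0, "level": 2.52},
]
envelope = training_envelope(train_rows)

live_row = {"temperature": 182.0, "level": 3.40}
flagged = out_of_envelope(envelope, live_row)
safe_prediction = clip_to_physical_bounds(104.2)

print(f"parameters outside the training envelope: {flagged}")
print(f"prediction after applying physical bounds: {safe_prediction}")
```

A flagged parameter does not mean the prediction is wrong, only that the model is extrapolating; such points are good candidates for retraining data or for comparison against a simulation.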

We hope this article will help you to nurture and accelerate your process data model in a more refined way.

- Published in Blog

How Seeq enables the Practice of MLOps for Continuous Integration and Development of the Machine Learning Models

Introduction:

Due to the rapid advancement in technology, the manufacturing industry has adopted a wide range of digital solutions that directly benefit the organization in various ways. One of these is the application of Machine Learning and AI for predictive analytics. The same industry that earlier relied on MVA and other statistical techniques for inferring parametric relationships has now moved towards predictive models. Using ML/AI, it can not only understand the importance of parameters but also make predictions in real time and forecast future values. This helps the industry manage and continuously improve the process by mitigating operational challenges: reducing downtime, increasing productivity, improving yields and much more. But to provide such continuous support to operations in real time, the underlying models and techniques also need to be continuously monitored and managed. This brings in the requirement for MLOps, a term borrowed from DevOps, which can be used to manage your model in a responsive fashion using its CI/CD capabilities. Essentially, MLOps enables you not only to develop your model but also to deploy and manage it in the production environment.

Let’s try to add more relevance to it and understand how Seeq can help you to achieve that.

Note: Seeq is a self-service analytics tool that does more than modeling. This article assumes that the reader is familiar with the basics of the Seeq platform.

Need for Seeq?

When it comes to process data analytics/modeling, visuals become very important. After all, you believe in what you see, right?

To deliver quick, actionable insights, the data needs to be visualized in a processed form that can directly benefit the operations team. The processed form could be cleaned data, derived data, or even predicted data, but to make it actionable it needs to be visualized. The solution should integrate smoothly with the culture of the industry. If we expect the operator to make better decisions, then we also expect the solution to be easily accepted by them.

MLOps in Seeq

In process data analytics, models can take various forms, such as first-principle, statistical or ML/AI models. For the first two categories, management and deployment are simple, because the model is essentially a set of correlations in the form of equations. Such models also come with complete transparency, unlike ML/AI models. An ML/AI model, on the other hand, is a black box that adds a degree of ambiguity and unpredictability to the outcomes, and it requires timely management and tuning of the model parameters. The need for retuning could arise either from data drift or from the addition/removal of parameters from the model inputs. To enable this workflow, Seeq provides the following solution:

- Model Development:

One can make use of Seeq Data Lab (SDL) to build and develop the models. SDL is a Jupyter Notebook-like interface for scripting in Python. Using SDL, you can access live data, select the best model, and finally create a web app using its AppMode feature for a low-code environment. As a best practice, one can use the spy.push method to push the model results back into Seeq and get the maximum information out of the model using Seeq Workbench and its advanced visualization capabilities.

- Model Management (CI/CD):

Once the model is deployment-ready, the Python script for the developed model can be placed in a defined location on the server so that it can be accessed from the production environment. After successful authentication and validation, the model becomes visible in the list of connectors. It can then be linked to the live input streams to predict values in real time.

- Visibility of the Model:

Once the model is deployed in the production environment, one can continuously monitor the predictions and get notified of any deviations, which may be an outcome of data drift. The advanced visualization capabilities of Seeq enable the end user to extract maximum value/information out of the data with ease and flexibility.
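The monitoring idea in the last step can be sketched independently of any platform. The class below is a hypothetical illustration, not part of Seeq or its API: it tracks the rolling mean absolute error between predictions and later measurements (for example lab results) and raises a flag when that error exceeds a multiple of the error seen at validation time. The window size, factor and data are assumptions.

```python
from collections import deque

class ResidualMonitor:
    """Flags a deviation when the rolling mean absolute error exceeds a threshold."""

    def __init__(self, baseline_mae, window=3, factor=2.0):
        self.threshold = factor * baseline_mae
        self.errors = deque(maxlen=window)

    def update(self, predicted, measured):
        """Record one prediction/measurement pair; return True if an alert is due."""
        self.errors.append(abs(predicted - measured))
        window_full = len(self.errors) == self.errors.maxlen
        rolling_mae = sum(self.errors) / len(self.errors)
        return window_full and rolling_mae > self.threshold

# Hypothetical validation error of 0.5 units gives an alert threshold of 1.0
monitor = ResidualMonitor(baseline_mae=0.5, window=3, factor=2.0)

# (predicted, measured) pairs; the process starts to deviate from the fourth pair on
pairs = [(10.0, 10.2), (10.1, 9.8), (10.0, 10.4),
         (10.0, 12.0), (10.1, 12.5), (10.2, 12.9)]
alerts = [monitor.update(p, m) for p, m in pairs]
print(alerts)
```

Using a rolling window rather than a single point keeps one noisy measurement from triggering a notification, at the cost of reacting a few samples later.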

To get the most out of MLOps, we recommend applying visual analytics to your data and model workflow. Visual analytics at each stage of the ML lifecycle helps derive better actionable insights, which can be scaled and adopted across the organization to bring siloed information together for an enhanced outcome.

Innovate your Analytics

I really hope that this article helped you to define the right analytical strategy for your industrial digitalization journey. For this article, our focus was on how Seeq can support the easy implementation of MLOps using industrial manufacturing data.

Who We Are?

We, the Process Analytics Group (PAG), a part of Tridiagonal Solutions, have the capability to understand your process and create a Python-based template that can integrate with multiple analytical platforms. These templates can be used as a ready-made, low-code solution with the intelligence of a process-integrity model (thermodynamic/first-principle model), and they can be extended to any analytical solution that offers Python integration. We can also provide an offline solution with our in-house tool (SoftAnalytics) for soft-sensor modeling and root cause analysis using advanced ML/AI techniques. We provide the following solutions:

- We run a POV/POC program – For justifying the right analytical approach and evaluating the use cases that can directly benefit your ROI.

- A training session for upskilling the process engineer – How to apply analytics at its best without getting into the maths behind it (How to apply the right analytics to solve the process/operational challenges)

- Python-based solution- Low code, templates for RCA, Soft-sensors, fingerprinting the KPIs, and many others.

- We provide a team that can be a part of your COE and can continuously help you to improve your process efficiency and monitor your operations on a regular basis.

- A core data-science team (Chemical Engg.) that can handle the complex unit processes/operations by providing you the best analytical solution for your processes.

Written by,

ParthPrasoon Sinha

Sr. Data Scientist

Tridiagonal Solutions

Statistical and Machine Learning for Predictions and Inferences – Process Data Analytics

Introduction:

When it comes to the process industry, there is a plethora of operational challenges, but no single standard technique that can address all of them. Common operational challenges include identification of critical process parameters, control of process variables and quality parameters, and many more. Every technique has its own advantages and disadvantages, but to use it at its best, one should know what to use and when. This is an important point, as there are many different types of models that can be used for a “fit-for-requirement” purpose. So, let us take a deeper look at the modeling landscape.

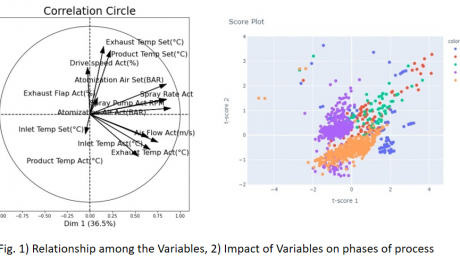

Statistical models in the Process Industry

Statistical models are normally preferred when we are more interested in identifying the relationships between the process variables and the output parameters. These models involve hypothesis testing for distribution analysis, which helps us estimate metrics such as the mean and SD of the sample and the population. Is your sample a representative picture of your population? This is an important concept, and the answer is the initial piece of information for any model-building exercise. The Z-test, chi-squared test, t-test (univariate and multivariate), ANOVA, least squares and many other advanced techniques can be used to perform the statistical analysis and estimate the difference between the sample and the population dataset.

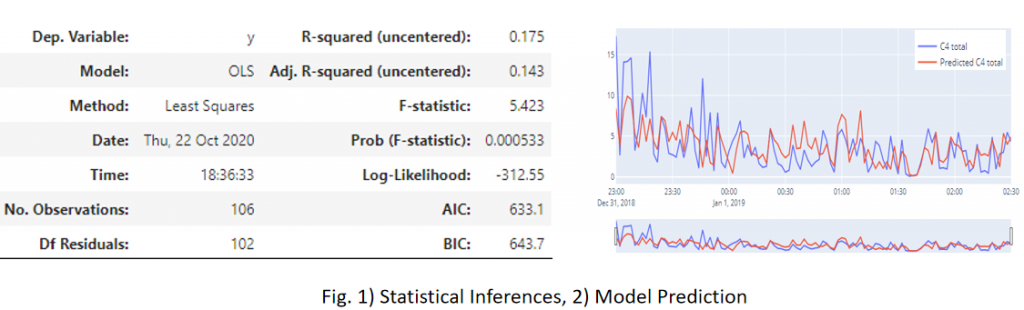

Let us try to understand this with an example. Consider a distillation process for which you have data on feed pressure, flow rate, temperature and purity of the top stream, and assume that 1 year’s worth of data is available. You split the dataset in some ratio, say 7:3 for training and testing, and applied some model. But the model did not meet your expectations in terms of the desired accuracy. So, what could have gone wrong? There are many possibilities, right? For this article, let us focus on the statistical inferences. Since the model was not able to generalize on the distillation dataset, we should examine the dataset first. How? Did we compare the mean and SD of the training and testing datasets? No? Then we should! As discussed above, to set up a model for reliable predictions we need to be sure that the samples (in this case, the training and testing datasets) are representative of the population dataset. This means that the mean and SD should not differ significantly between the two datasets, nor from those of any random sample drawn from the historical population dataset. This also gives us an idea of the minimum volume of data required to assess the model’s robustness and predictability. To support confidence in the effect of any parameter on the output variable, we can also use the p-value. It indicates the statistical significance of an input parameter, which is often used as a guide to feature importance.
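The train/test comparison suggested above might look like the following sketch. The feed temperature series is synthetic, with an assumed step change late in the year, and the comparison uses Welch's t statistic with a rough |t| > 2 rule of thumb rather than an exact p-value; both are illustrative choices.

```python
import math
import random
import statistics

def compare_samples(a, b):
    """Return mean and SD of both samples plus Welch's t statistic for the means."""
    mean_a, mean_b = statistics.fmean(a), statistics.fmean(b)
    var_a, var_b = statistics.variance(a), statistics.variance(b)
    t = (mean_a - mean_b) / math.sqrt(var_a / len(a) + var_b / len(b))
    return {
        "mean_a": mean_a, "sd_a": math.sqrt(var_a),
        "mean_b": mean_b, "sd_b": math.sqrt(var_b),
        "t": t,
    }

random.seed(42)

# Synthetic daily feed temperature for one year, with a shift from day 300 onwards
feed_temp = [random.gauss(95.0 if day < 300 else 97.0, 1.0) for day in range(365)]

# Chronological 7:3 split, as is common for time-ordered process data
split = int(0.7 * len(feed_temp))
train, test = feed_temp[:split], feed_temp[split:]

result = compare_samples(train, test)
print(f"train mean={result['mean_a']:.2f}, sd={result['sd_a']:.2f}")
print(f"test  mean={result['mean_b']:.2f}, sd={result['sd_b']:.2f}")
print(f"Welch t={result['t']:.1f} (|t| > 2 suggests the two sets differ)")
```

When the two sets differ this clearly, a poor test score says more about the split than about the model, and the split (or the amount of history used) should be revisited before tuning anything else.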

Machine Learning Models in the Process Industry

So, now we have some idea of the importance of statistical models for process data. They tell us how the parameters relate to each other and to the quality parameters. Machine learning models can then be used to enable the predictions.

Typically, what we have seen in the process industry is that people need correlations and relationships in the form of an equation. A black-box model sometimes adds complexity in practical applications, as it provides no information on how the prediction was arrived at.

Sometimes even a model with 80% accuracy is sufficient if it provides enough information on the relationships among the process parameters. For this reason, we always try first to fit a parametric model, such as a linear or polynomial model, with the desired scaling on the dataset. This at least gives us a fair understanding of the amount of variability explained by each pair of parameters and of how interpretable the relationship is.
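A parametric first attempt of this kind can be as simple as an ordinary least-squares line, which gives an explicit equation and an R² value. The feed temperature and purity values below are made up for illustration.

```python
import statistics

def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x. Returns (slope, intercept, r2)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot

# Hypothetical data: feed temperature (deg C) vs. top-stream purity (%)
feed_temp = [90.0, 92.0, 94.0, 96.0, 98.0, 100.0]
purity = [91.2, 92.1, 93.3, 93.9, 95.1, 95.8]

slope, intercept, r2 = fit_line(feed_temp, purity)
print(f"purity = {intercept:.2f} + {slope:.3f} * feed_temp  (R^2 = {r2:.3f})")
```

The printed equation is something an engineer can read and challenge directly: the slope states how much the purity changes per degree of feed temperature, which a black-box model does not offer without extra tooling.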

Conclusion

Predictions and inferences/interpretability should always go hand in hand in the process industry for a transparent and reliable model. A prediction by itself is not sufficient for the industry; it needs more. For example, if the predicted value is not optimal or does not meet the standard specifications, what should be done? The answer is a recommendation or prescription, in the form of an SOP that the operator or engineer can follow to bring the process back to its optimal state, and this comes from statistical inferencing. We suggest that, for a strategic digitalization deployment across your organization, you use the best of both techniques.

To know more on how to use these techniques for your specific process application, kindly contact us.

Who We Are?

In case you are starting your digitalization journey or are stuck somewhere, we (Process Analytics Group) at Tridiagonal Solutions can support you in many ways.

We run the following programs to help the industry along with various needs:

- We run the POV/POC program– For justifying the right analytical approach and evaluating the use cases that can directly benefit your ROI.

- A training session for upskilling the process engineer – How to apply analytics at its best without getting into the maths behind it (How to apply the right analytics to solve the process/operational challenges)

- Python-based solution– Low code, templates for RCA, Soft-sensors, fingerprinting the KPIs, and many others.

- We provide a team that can be a part of your COE and can continuously help you to improve your process efficiency and monitor your operations on a regular basis.

- A core data-science team (Chemical Engg.) that can handle the complex unit processes/operations by providing you the best analytical solution for your processes.

Written by,

ParthPrasoon Sinha

Sr. Data Scientist

Tridiagonal Solutions

Hail Machine Learning Models, but sometimes you’re Precarious!!

Good morning, good afternoon or evening to all. Pick the one which belongs to you!

So, before we get to the centerpiece of the article, I just want to set some context. This article is neither too technical nor too generic; it is dedicated to all of us who are, in one way or another, connected to the field of digitalization in the manufacturing industry.

Sometimes we are so taken by the technicality of a problem that we stop thinking about it in a plain engineering way. Don’t you feel so?

Sometimes, it’s suitable (and a need too!!) – to rethink the objective and solve it by taking a logical approach, indeed a practical one.

Have you ever felt that, with the advent of the technology (ML and AI, I mean!), we try to force-fit models everywhere without any proper definition and evaluation of the requirements, needs or investments (ROI, in other words)? Still not clear, right?

A fun fact, though I do not hold any statistics on this one: I still feel that “the rate at which engineers are turning into data scientists is higher than the literacy rate itself.” Do you agree?

But are we really making any practical use of this transformation? It is an observation that, before we apply any model to the process data, we force ourselves to think like one, right? So we miss out on the logical understanding and the practical mindset that should go behind it.

Hail Model! That’s right. Machine learning and AI have definitely empowered us to solve many engineering problems in an easy and comprehensive fashion, such as real-time prediction of quality parameters, forecasting the next probable failure event for an asset, and many more. They have democratized the industry in many ways, by removing the long-standing dependency on lab analysis in many operations, the dependency on simulations to estimate the values of quality parameters, and more. Results that earlier took days to come out of other siloed mechanisms are now available almost in real time.

But do you really think that these can solve all of your problems? No, right? Moreover, sometimes the infrastructure and technology cost behind such an application is huge, even more than the return itself. So what should we do? Should we stop thinking about these applications? Or should we wait till the cost plummets? The answer is “No”. Then what should we do?

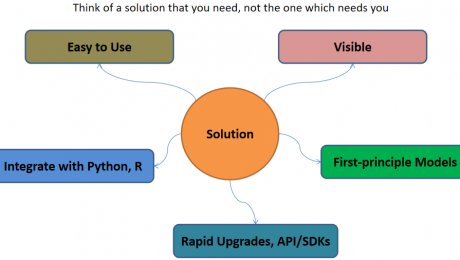

There needs to be a logical approach behind these, which means that someone has given us the power to use the technology, but how to use it is up to us. In this case, the drivers of the technology, the digitalization leaders, have to take ownership of such programs. They should be well versed in the technology targeting the manufacturing industries. A plethora of solutions is available, so which one should be selected? This is the next big question, and it connects to the previous one. The answer is that the solution should be so easy to use that even the operators and engineers can learn it. Right? I mean, what’s the use of a technology that doesn’t make your life easier? Correct!!

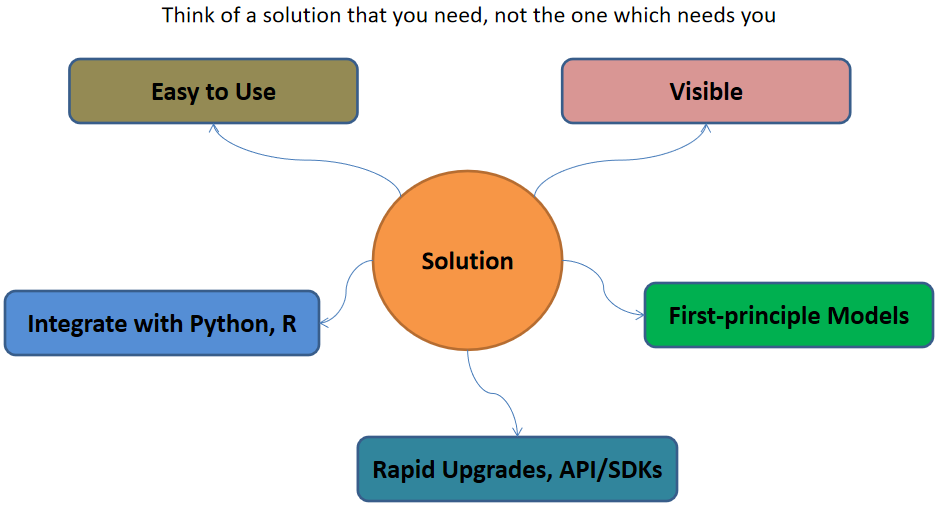

So, coming directly to the solution part, here are the evaluation criteria to keep in mind before investing, but after working out the requirements and needs:

- Comprehensible: Simple, easy-to-use solutions – that can get into the hands of operators and engineers (The real industry drivers!!)

- Blend-able: Capability-wise – Easy to combine with open-source and widely accepted programming solutions, such as Python, R and MATLAB

- Reform-able: The solution should be capable of upgrading itself as the technology advances. Otherwise, it will soon be outdated. This is a really important criterion, as one always invests with long-term goals in mind, not short-term ones.

- Visible: The solution should be capable of providing Visuals – be it in terms of trends in real-time or 3D CAD models, like the one in digital twin, which we all dream about.

- Though we say models are a black box, we still want to see what they are doing, right? At least, what are they outputting? Operators don’t care which model you apply or what technique you use; they just want a better view of what is happening inside that piece of equipment, that’s it.

- Solvable: We are all engineers, correct? We want to solve equations and correlations; it’s our job. So the solution should be capable of letting you solve complex algebraic equations – we call these first-principle models.

Written by,

ParthPrasoon Sinha

Sr. Data Scientist

Tridiagonal Solutions
